Working as an SE at a continuous pentesting company means I walk through a lot of environments. Different industries, different stacks, different maturity levels. The one constant lately is that almost every customer wants to talk about AI, and almost every conversation eventually lands on the same question: where is it actually helping defenders right now?

The honest answer is that AI has moved past the marketing slides. Large language models, modern embeddings, and purpose-built ML pipelines are showing up in real workflows for analysts, threat hunters, and security engineers. That matters because attackers are not waiting. We see it on our side of the engagement every week. Phishing kits write better English. Recon is industrialized. Malware authors generate more convincing lures with less effort. Defenders who do not adapt are bringing a knife to a gunfight.

Here is what I see working, where it falls short, and what to keep in mind when the next “AI-powered” product hits your inbox.

Cutting Through the Noise: Triage and Threat Hunting

The most universal pain point in security operations is alert volume. A mid-sized SOC routinely sees tens of thousands of alerts per day across endpoint, identity, network, and cloud telemetry. Most are noise. A handful matter. Analysts burn out trying to tell the difference.

AI is making a real dent here in two ways. First, classifiers and embeddings are layered on top of SIEM and XDR pipelines to cluster similar alerts, suppress duplicates, and surface the small subset that warrants a human eye. Second, language models enrich alerts at the moment of triage. They pull in asset context, prior incidents, threat intel notes, and CVE detail, then produce a short narrative summary an analyst can read in seconds rather than the five minutes it would otherwise take to assemble.
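To make the clustering idea concrete, here is a minimal sketch of grouping near-duplicate alerts so an analyst sees one representative per group. It is an illustration under stated assumptions, not how any particular SIEM or XDR product does it: the `Alert` type, the sample alert text, and the 0.6 threshold are all hypothetical, and word-set Jaccard similarity stands in for the embedding similarity a real pipeline would use.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    alert_id: str
    text: str


def tokens(text: str) -> set[str]:
    """Lowercased word set; a crude stand-in for an embedding vector."""
    return set(text.lower().split())


def jaccard(a: set[str], b: set[str]) -> float:
    """Overlap between two token sets, from 0.0 (disjoint) to 1.0 (identical)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def cluster_alerts(alerts: list[Alert], threshold: float = 0.6) -> list[list[Alert]]:
    """Greedy single-pass clustering: an alert joins the first cluster whose
    representative (its first alert) is similar enough, else starts a new one."""
    clusters: list[list[Alert]] = []
    for alert in alerts:
        for cluster in clusters:
            if jaccard(tokens(alert.text), tokens(cluster[0].text)) >= threshold:
                cluster.append(alert)
                break
        else:
            clusters.append([alert])
    return clusters


# Hypothetical sample alerts.
alerts = [
    Alert("a1", "failed login for user svc_backup from 10.0.0.5"),
    Alert("a2", "failed login for user svc_backup from 10.0.0.6"),
    Alert("a3", "powershell spawned by winword.exe on FIN-SRV-01"),
]

clusters = cluster_alerts(alerts)
for cluster in clusters:
    # One line per cluster: the representative plus how many alerts it absorbed.
    print(f"{cluster[0].alert_id} (+{len(cluster) - 1} similar): {cluster[0].text}")
```

The two failed-login alerts collapse into one group and the PowerShell alert stands alone. Swapping `jaccard` for cosine similarity over real embeddings changes the quality of the grouping, not the shape of the workflow.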

Threat hunting benefits from a different angle. Hunters spend a disproportionate amount of time forming hypotheses, writing queries, and pivoting through data. LLMs translate fuzzy questions like “show me unusual parent-child process relationships on finance servers in the last 30 days” into concrete KQL, SPL, or SQL. That lowers the skill barrier for junior staff and frees senior hunters to focus on the analytical work machines still cannot do well: reasoning about an adversary’s intent and pattern of life inside a specific environment.

The framing I keep coming back to is that AI is not replacing the analyst. It is acting as a tireless intern that does the first pass of summarization, query writing, and correlation, leaving the human to make the call.

Prioritizing the Attack Surface

Vulnerability management has a math problem. A typical enterprise has hundreds of thousands of findings spread across endpoints, web applications, cloud configurations, identities, and code repositories. Patching all of them is impossible. Patching the right ones requires understanding which findings are actually reachable, which ones an attacker would chain together, and which ones are exposed to the internet in a way that makes exploitation realistic.

This is where AI is starting to deliver real leverage. Modern attack surface platforms use machine learning to correlate scan output, asset inventories, identity graphs, and exploit intelligence into prioritized attack paths rather than flat lists of CVEs. Instead of “you have 42,000 vulnerabilities,” a defender sees “these twelve findings, taken together, give an external attacker a credible path to your customer database.” That is a fundamentally different conversation, and one I find resonates with executives in a way a CSV of CVSS scores never has.

I will say this candidly, because it is what we see in our engagements. The vulnerabilities that get exploited in real attacks are rarely the ones with the highest CVSS scores. They are the ones that chain together. AI is finally good at finding those chains at scale, and that is a meaningful upgrade for defense.
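The chaining point is easier to see with a toy model. The sketch below treats findings as edges in a graph and searches for a route from the internet to a sensitive asset. Everything in it is hypothetical: the asset names, the findings, and the `shortest_attack_path` helper are made up for illustration, and a real attack path platform weighs far more than reachability.

```python
from collections import deque

# Hypothetical findings graph. An edge means "an attacker who controls the
# source can reach the target by exploiting the named finding".
EDGES = {
    "internet": [("web-app", "exposed admin panel with default credentials")],
    "web-app": [("app-server", "SSRF to an internal service")],
    "app-server": [("customer-db", "over-privileged service account")],
    "workstation": [("file-share", "critical RCE, high CVSS, not internet-reachable")],
}


def shortest_attack_path(edges, start, target):
    """Breadth-first search. Returns the list of (asset, finding) hops from
    start to target, or None if the target is not reachable."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == target:
            return path
        for next_node, finding in edges.get(node, []):
            if next_node not in seen:
                seen.add(next_node)
                queue.append((next_node, path + [(next_node, finding)]))
    return None


path = shortest_attack_path(EDGES, "internet", "customer-db")
for asset, finding in path:
    print(f"-> {asset}: {finding}")

# The high-CVSS finding never shows up on an external path in this toy graph.
print(shortest_attack_path(EDGES, "internet", "file-share"))
```

None of the three findings on the path to the database would necessarily top a CVSS-sorted list, yet together they form the route that matters. The high-scoring RCE is real but unreachable from outside in this example.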

LLMs add another layer by reading the raw output of scanners, code reviews, and pentests and translating it into something the asset owner can act on. A finding that used to read like a paragraph of cryptic tool output now arrives as a plain-language description of the risk, a suggested remediation, and the relevant code snippet. That shortens the time between detection and fix.

Faster Incident Response and Forensics

When an incident hits, time is the only currency that matters. Responders are trying to answer four questions at once. What happened? How far did it spread? What data was touched? How do we evict the attacker? Each of those questions historically required hours of manual log review.

AI is compressing that timeline. Models trained on log formats and forensic artifacts can rapidly summarize a host’s activity, flag suspicious sequences, and propose a probable timeline. Investigators still verify the conclusions, but they start from a coherent draft rather than a blank page. For organizations without a mature IR function, this is genuinely transformative. A smaller team can credibly handle a more sophisticated incident.
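As a rough sketch of what a "coherent draft" timeline means in practice, the example below orders already-parsed host events and flags one suspicious sequence: a process event followed by a network connection within a short window. The event records, field names, and the five-minute window are assumptions made up for illustration. A real tool would work from far richer artifacts and far more patterns.

```python
from datetime import datetime, timedelta

# Hypothetical, already-parsed host events, deliberately out of order.
events = [
    {"ts": "2024-05-01T10:07:30", "type": "network", "detail": "powershell.exe -> 203.0.113.7:443"},
    {"ts": "2024-05-01T10:05:00", "type": "process", "detail": "winword.exe spawned powershell.exe"},
    {"ts": "2024-05-01T09:58:12", "type": "logon", "detail": "interactive logon by jdoe"},
]


def build_timeline(events):
    """Return the events sorted by timestamp, oldest first."""
    return sorted(events, key=lambda e: datetime.fromisoformat(e["ts"]))


def flag_sequences(timeline, window=timedelta(minutes=5)):
    """Flag each process event that is followed by a network event within the window."""
    flagged = []
    for i, first in enumerate(timeline):
        if first["type"] != "process":
            continue
        started = datetime.fromisoformat(first["ts"])
        for later in timeline[i + 1:]:
            elapsed = datetime.fromisoformat(later["ts"]) - started
            if later["type"] == "network" and elapsed <= window:
                flagged.append((first, later))
    return flagged


timeline = build_timeline(events)
for event in timeline:
    print(event["ts"], event["type"], event["detail"])

flagged = flag_sequences(timeline)
for first, later in flagged:
    print("REVIEW:", first["detail"], "then", later["detail"])
```

The output is a starting point for the investigator, not a conclusion. The flagged pair still needs a human to decide whether it is an intrusion or a macro-heavy spreadsheet doing its job.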

The same capability extends to phishing defense. Inbound email is now a battle of generative models on both sides. Attackers use LLMs to produce grammatically perfect, contextually relevant lures, often referencing real internal projects scraped from LinkedIn or leaked data. We watch this work in our social engineering engagements. The hit rates on well-tuned, AI-assisted lures are significantly higher than those of the generic templates from even two years ago. Defenders are responding with models that look at content, header metadata, sender reputation, and the recipient’s behavioral history together. They flag the small anomalies that even a careful human would miss. Awareness training has evolved alongside this. Instead of generic monthly modules, employees can now be tested with simulated lures personalized to their role, generated on demand.

The Honest Limits

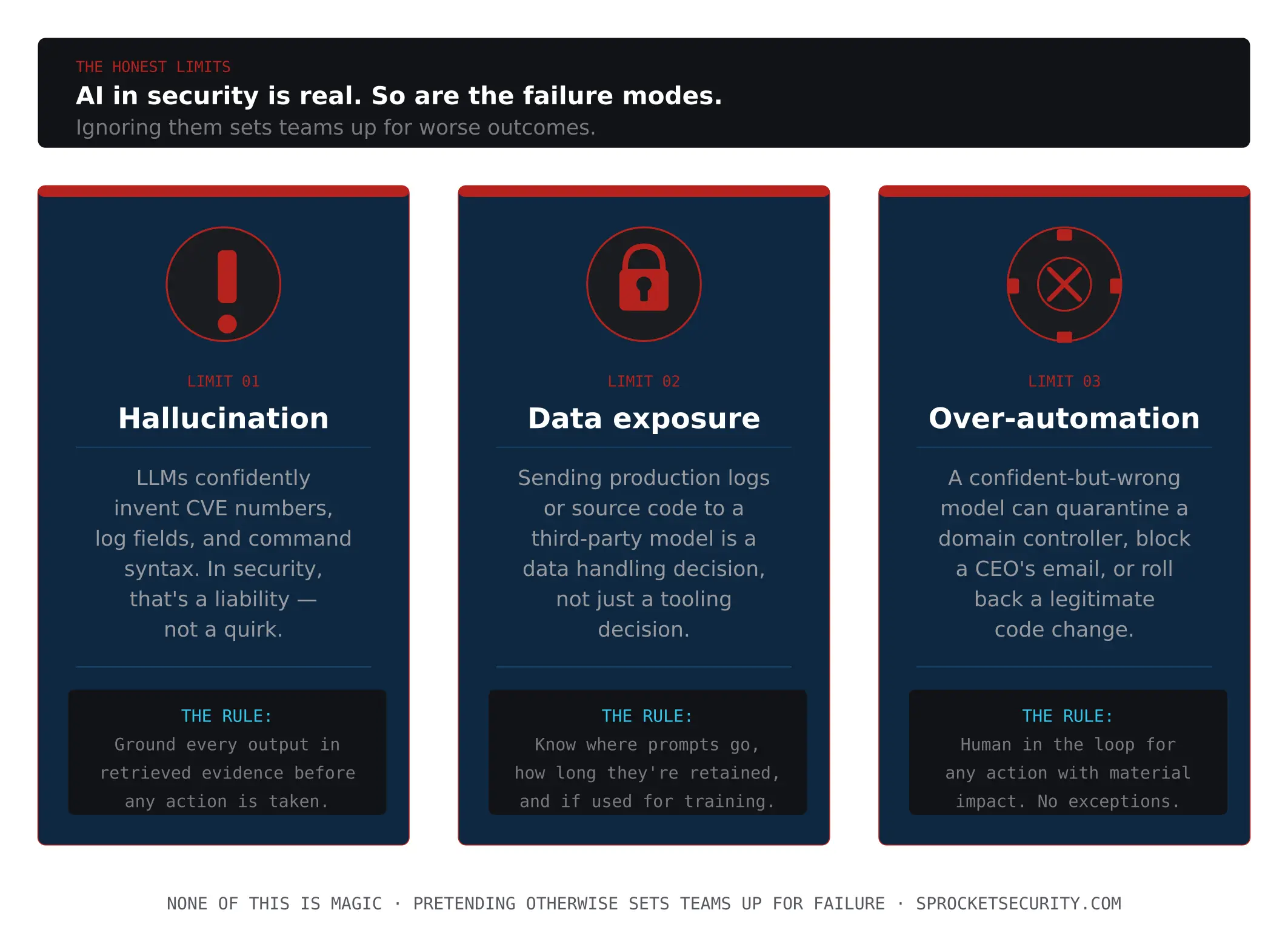

None of this is magic, and pretending otherwise sets teams up for failure. Three limits are worth taking seriously.

The first is hallucination. Language models will confidently invent CVE numbers, log fields, and command syntax. In a security context, that is not a quirky failure mode. It is a serious liability. Any AI output that informs a containment, blocking, or remediation decision must be grounded in retrieved evidence and verified before action.
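One cheap grounding check is to confirm that every CVE identifier a model cites also appears in the evidence it was given. The sketch below is a minimal version of that idea. The `ungrounded_cves` helper, the sample summary, and the sample scanner line are hypothetical, and the second CVE ID is deliberately invented to play the role of a hallucination.

```python
import re

# CVE IDs look like CVE-<year>-<4 or more digits>.
CVE_PATTERN = re.compile(r"CVE-\d{4}-\d{4,}")


def ungrounded_cves(model_output: str, evidence: list[str]) -> set[str]:
    """Return CVE IDs cited by the model that appear in none of the evidence documents."""
    cited = set(CVE_PATTERN.findall(model_output))
    supported: set[str] = set()
    for document in evidence:
        supported.update(CVE_PATTERN.findall(document))
    return cited - supported


# Hypothetical model summary. CVE-2099-12345 is invented on purpose.
summary = "Host is affected by CVE-2021-44228 and CVE-2099-12345; isolate immediately."
# Hypothetical retrieved evidence, e.g. a line of scanner output.
evidence = ["scanner: log4j-core 2.14.1 detected, matches CVE-2021-44228"]

suspect = ungrounded_cves(summary, evidence)
if suspect:
    print("Do not act yet, unverified identifiers:", sorted(suspect))
```

A check like this catches only one class of invention. Log fields, command syntax, and hostnames need their own validation against real data before anything acts on them.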

The second is data exposure. Sending production logs, source code, or sensitive incident detail to a third-party model is a data handling decision, not just a tooling decision. Teams should know exactly where their prompts go, how long they are retained, and whether they are used for training. Many organizations now run sensitive workloads against private model deployments precisely for this reason.

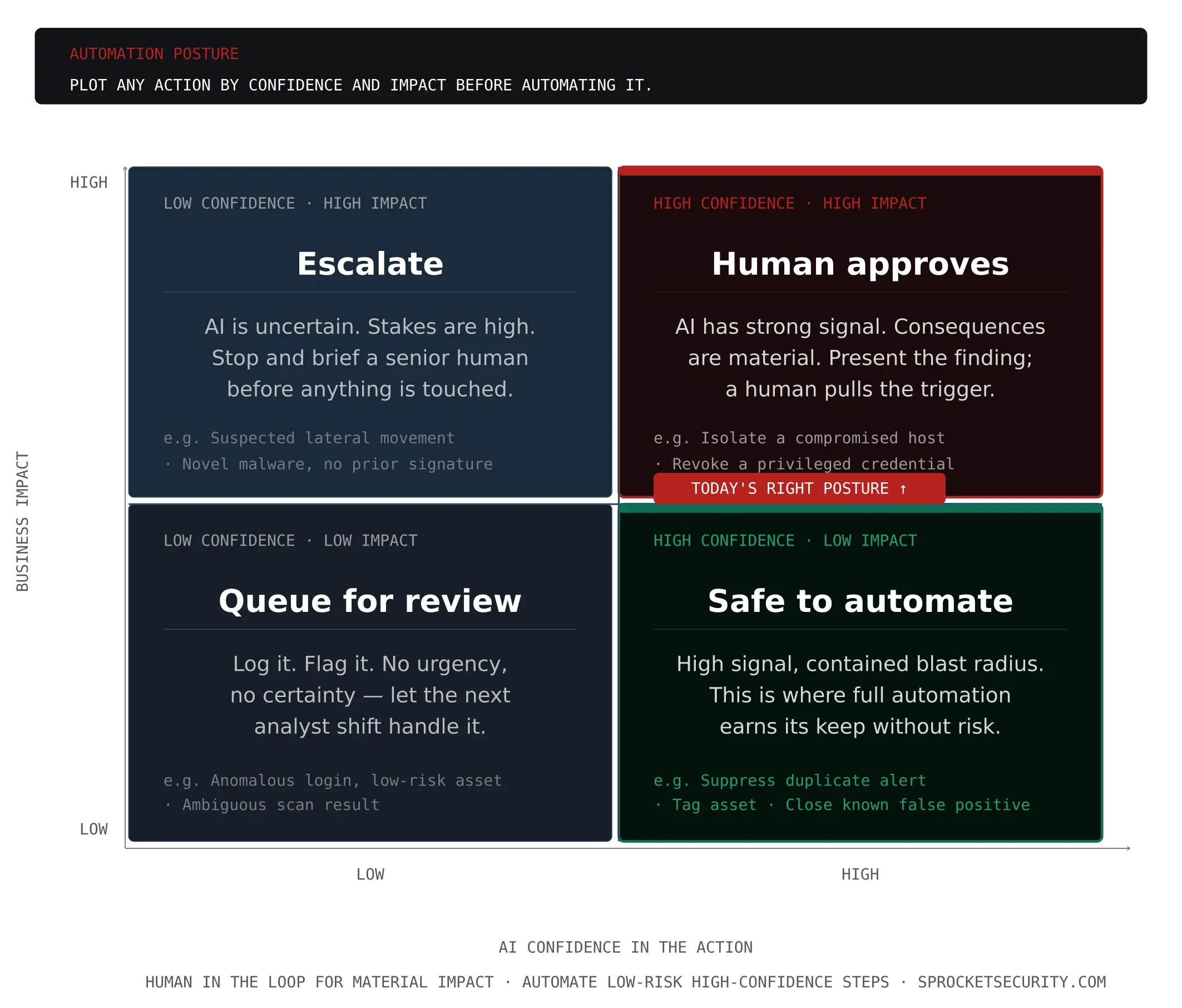

The third is over-automation. The temptation to let an AI agent autonomously triage, contain, and remediate is strong because the labor savings are obvious. The risk is equally obvious. A confident but wrong model can quarantine a domain controller, block a CEO’s email, or roll back a legitimate code change. The right posture today is human in the loop for any action with material impact, with full automation reserved for low-risk, high-confidence steps.
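That posture can be written down as a simple gate in front of whatever executes actions. The sketch below is one way to express it, under assumptions: the action names, the allowlist, and the 0.95 confidence threshold are hypothetical placeholders that each team would set for itself.

```python
from enum import Enum


class Decision(Enum):
    AUTO_EXECUTE = "auto_execute"
    NEEDS_APPROVAL = "needs_approval"


# Hypothetical allowlist of actions considered low-risk and easy to undo.
LOW_RISK_ACTIONS = {"enrich_alert", "add_ticket_comment", "close_duplicate_alert"}


def gate(action: str, confidence: float, min_confidence: float = 0.95) -> Decision:
    """Allow automation only for allowlisted actions at high confidence.
    Everything else, including any unknown action, goes to a human."""
    if action in LOW_RISK_ACTIONS and confidence >= min_confidence:
        return Decision.AUTO_EXECUTE
    return Decision.NEEDS_APPROVAL


for action, confidence in [
    ("close_duplicate_alert", 0.99),
    ("quarantine_host", 0.99),
    ("enrich_alert", 0.50),
]:
    print(action, confidence, gate(action, confidence).value)
```

The design choice that matters is the default. An action the gate has never heard of requires approval, so a new agent capability cannot become autonomous by accident.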

There is also a strategic limit worth naming. The same capabilities that help defenders are available to attackers, often more cheaply, because adversaries do not have to worry about compliance, accuracy, or auditability. The asymmetry will not go away. Defenders win by being faster at adopting these tools responsibly, not by hoping attackers will not adopt them.

What This Means for Security Leaders

For leaders evaluating where to invest, three principles travel well. Treat AI as an accelerator for the people you already have, not a replacement for them. The teams that win with AI are the ones whose senior practitioners shape how it is used. Insist on grounding and explainability. Any tool that cannot show its work is a liability in an incident. Measure outcomes, not adoption. Alerts triaged per analyst, mean time to detect, mean time to remediate, and percentage of critical findings closed within SLA are the numbers that should move.
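For teams that do not already track these numbers, the arithmetic is simple. The sketch below computes mean time to detect, mean time to remediate, and the share of critical findings closed within SLA. All of the records are made up, the seven-day SLA is a placeholder, and the definitions are assumptions: detection time is measured from occurrence to detection, remediation time from detection to fix, and findings still open count against the SLA.

```python
from datetime import datetime, timedelta
from statistics import mean

# Hypothetical incident records; the timestamps are invented for illustration.
incidents = [
    {"occurred": datetime(2024, 1, 1, 8, 0), "detected": datetime(2024, 1, 1, 10, 0),
     "remediated": datetime(2024, 1, 1, 16, 0)},
    {"occurred": datetime(2024, 1, 2, 9, 0), "detected": datetime(2024, 1, 2, 13, 0),
     "remediated": datetime(2024, 1, 3, 1, 0)},
]

# Hypothetical critical findings as (opened, closed); closed is None if still open.
critical_findings = [
    (datetime(2024, 1, 1), datetime(2024, 1, 4)),
    (datetime(2024, 1, 1), datetime(2024, 1, 6)),
    (datetime(2024, 1, 1), datetime(2024, 1, 11)),
    (datetime(2024, 1, 1), None),
]
SLA = timedelta(days=7)  # placeholder SLA


def mean_hours(deltas):
    """Average a collection of timedeltas and return the result in hours."""
    return mean(d.total_seconds() for d in deltas) / 3600


def pct_within_sla(findings, sla):
    """Percentage of findings closed within the SLA; open findings count as misses."""
    within = sum(
        1 for opened, closed in findings
        if closed is not None and closed - opened <= sla
    )
    return 100 * within / len(findings)


mttd = mean_hours(i["detected"] - i["occurred"] for i in incidents)
mttr = mean_hours(i["remediated"] - i["detected"] for i in incidents)
sla_pct = pct_within_sla(critical_findings, SLA)

print(f"MTTD: {mttd:.1f} h, MTTR: {mttr:.1f} h, critical within SLA: {sla_pct:.0f}%")
```

Whatever definitions a team picks, the point is to fix them before the tooling arrives so the before and after numbers are comparable.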

The other thing I would offer, from spending my days looking at customer environments through an attacker’s lens, is this. AI is most valuable to defenders when it is paired with a realistic picture of how those environments actually get attacked. A model can only prioritize what it is told to prioritize. If your understanding of your exposure is stale, your AI-assisted defense will be efficiently aimed at the wrong things. Continuous validation, whether through internal red teaming, continuous pentesting, or attack path analysis, is what keeps the model grounded in the threats that actually matter to you.

The defenders who will thrive over the next few years are not the ones who buy the most AI products. They are the ones who treat AI as a discipline integrated into their detection engineering, their hunting program, their vulnerability prioritization, and their IR playbooks, and who keep humans firmly in the loop for the decisions that matter.

The tools have caught up to the marketing. The advantage now goes to the teams that put them to work.